The Pixels to Points tool takes photos with overlapping coverage and generates a 3D point cloud using the photogrammetric methods of Structure from Motion (SfM) and Multi-View Stereovision. It can also generate an orthorectified image, individual orthoimages, and a photo-textured 3D model of the scene.

This technique uses overlapping photographs to derive the three-dimensional structure of the landscape and the objects on it, producing a 3D point cloud. The resulting point cloud is sometimes referred to as PhoDar or Fodar because it is similar to a point cloud from traditional lidar data collection. Photogrammetric point clouds generated in Global Mapper have an extra attribute labeled "Synthetic" with a Y value. Once generated, photogrammetric point clouds can be analyzed with other lidar processing tools. This tool is designed to work with sets of many overlapping images that contain geotags (EXIF data), including those collected from UAV or drone flights.

This tool requires Global Mapper Pro.

The Pixels to Points tool is accessible from the Lidar toolbar.

Note: The image to point cloud process is memory intensive and may take several hours, depending on the input data and quality setting. It is recommended to perform this process on a dedicated machine with at least 16 GB of RAM. This tool requires a 64-bit operating system. For more information about the requirements, see System Requirements.

Quick Tips for Using Pixels to Points

Press the Pixels to Points button and choose to use either the Wizard (automatic settings) or the Main Window.

To use the Main Window:

- Load the photos into the Input Image Files section using one of the Add options in the File menu or in the context menu when right-clicking on the Input Image Files list.

- Check the input images. Un-check any images that you do not wish to use in the reconstruction. Mask parts of the input images that you do not want or that may not reconstruct well, such as water or sky. If the input images have inconsistent lighting or contrast, turn on the Harmonize Color option. You can use the options in the Map menu, such as Picture Points and Ground Coverage Polygons, to check the coverage of your input images.

- Optionally, specify Ground Control Points.

- Specify the desired outputs using the check-boxes at the bottom left. Choose from:

  - Point Cloud - This is the main output of the Pixels to Points tool. A point cloud will be created automatically and embedded in the workspace if no output file is specified. Optionally, choose to Create the Point Cloud by Resampling the Mesh.

  - Orthoimage - Choose to create a continuous orthoimage of the scene and / or to orthorectify each individual input image.

  - Mesh/ 3D Model - Create a photo-textured 3D model in GMP or OBJ format.

  - Log / Stats

- Specify the output file names using the Select... button. The outputs can go to one package file or separate files for each output.

- Press Run to begin processing.

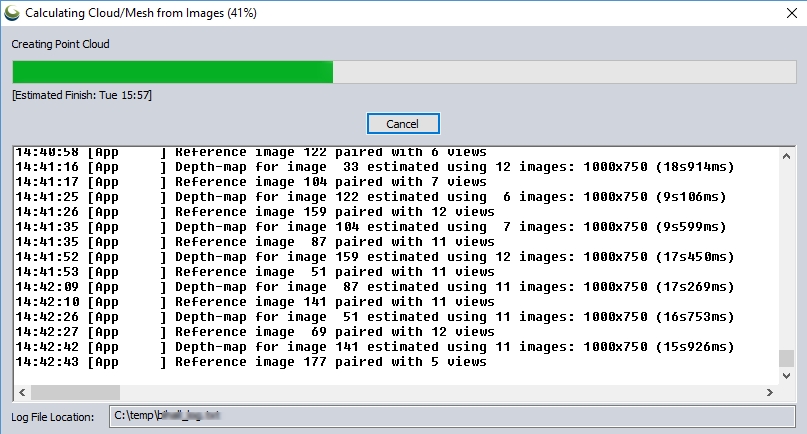

The Calculating Cloud/ Mesh from Images dialog will display the progress of the process and estimated completion.

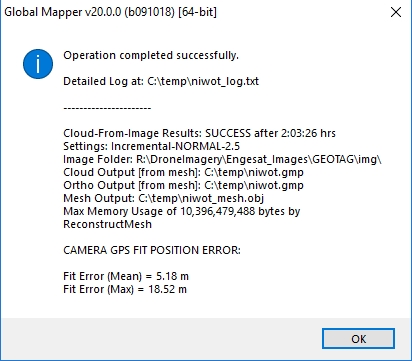

When finished, a dialog will display a summary of the log file location, settings, and location error summary.

Data Recommendations

This tool requires the input of many overlapping images. At least 60% overlap in image extents is recommended for successful point cloud generation. Evenly distributed photos taken from varying angles are also recommended. See Data Collection Recommendations for Pixels-to-Points™ for more information.
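As a rough planning aid, the overlap recommendation can be turned into a maximum spacing between photo centers. The sketch below assumes a downward-pointing camera over flat ground and a known ground footprint length along the direction of travel; the function name and the example footprint are illustrative and are not part of Global Mapper.

```python
def max_photo_spacing(footprint_m: float, overlap: float = 0.60) -> float:
    """Largest distance between consecutive photo centers that still
    gives the requested fractional overlap along that direction."""
    if not 0.0 <= overlap < 1.0:
        raise ValueError("overlap must be in the range [0, 1)")
    if footprint_m <= 0.0:
        raise ValueError("footprint_m must be positive")
    # Each new photo may advance by the part of the footprint
    # that is NOT shared with the previous photo.
    return footprint_m * (1.0 - overlap)


if __name__ == "__main__":
    # Hypothetical photo covering 100 m of ground, 60% overlap requested.
    print(round(max_photo_spacing(100.0, 0.60), 2), "m between photos")
```

Higher overlap values shrink the spacing quickly: at 80% overlap the same footprint allows only a fifth of its length between photos.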

Input Image Files

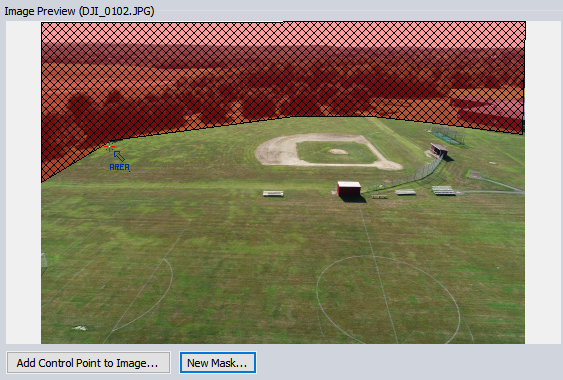

The input image files section lists the photos that will be processed by the tool. Load input images using the buttons below the input image files list. Highlight a loaded image to display it in the Image Preview window to the right of the list.

For more information about input images, see Pixels to Points - Input Image Files.

Image Preview

The Image Preview/ Ground Control Points section displays the image highlighted in the Input Image Files list. Roll the mouse wheel, or left-click and right-click on the image, to zoom in and out. Click and drag with the mouse wheel to pan the image.

Add Control Point to Image...

This tool is used to mark the location of a Ground Control Point on the image. For more information, see Ground Control Points with Pixels-to-Points™.

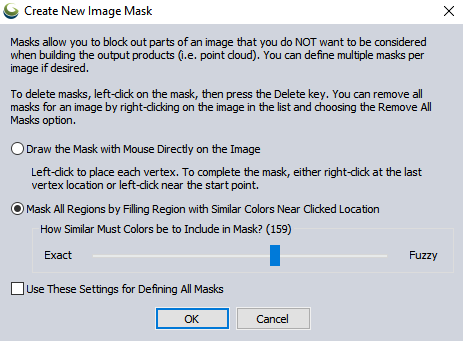

Masks can be used to cut out areas of the image that are either not of interest or disturb the reconstruction process (such as the sky in oblique photos). Using masks to focus only on the region of interest will speed up the processing time. Masks may be generated by manually drawing on the image in the Image Preview or by selecting a range of colors in the image to mask out.

For each image that you would like to mask, highlight the image in the Input Image Files List. Then press the New Mask button under the Image Preview.

To delete a mask, click it to select it, then press the Delete key.

Draw the Mask with Mouse Directly on the Image

Use this option to draw a mask polygon on the image. After selecting OK, left-click on the image to begin creating a polygon. Left-click to place each additional vertex, then right-click to complete the mask. Press the ESC key to cancel the mask creation.

Mask All Regions by Filling Region with Similar Colors Near Clicked Location

This option uses an eyedropper tool to select a pixel in the region you would like to mask. Then adjacent pixels of similar color will also be selected based on the Fuzzy setting of the slider.

Use These Settings for Defining all Masks

Check this option to suppress the Create New Mask Image dialog and reuse the current choice each time the New Mask button is pressed.

Output Files

A point cloud output will generate automatically and create a layer in the active workspace. Check the Save to GMP File option and enter a filename to save the point cloud output to a package file, rather than just storing it in a temp file. An orthoimage can also be generated and saved to the same file or, if so desired, a separate GMP file.

By default, the output files from Pixels to Points will be projected in the nearest identified UTM zone. The point cloud will use the vertical datum of the camera metadata (typically ellipsoidal height) or the ground control points if they are used.
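To see which UTM zone a dataset is likely to fall in, the standard 6-degree zone formula can be applied to a photo's longitude. This is a minimal sketch of that general formula, not of Global Mapper's own selection logic; it assumes WGS 84 based EPSG codes and ignores the special zone exceptions around Norway and Svalbard.

```python
import math


def utm_zone(lon_deg: float) -> int:
    """Standard UTM zone number (1-60) for a longitude in degrees."""
    zone = int(math.floor((lon_deg + 180.0) / 6.0)) + 1
    # Longitude +180 would compute as 61, so clamp to the valid range.
    return min(max(zone, 1), 60)


def utm_epsg(lat_deg: float, lon_deg: float) -> int:
    """EPSG code for WGS 84 / UTM: 326xx in the north, 327xx in the south."""
    base = 32600 if lat_deg >= 0 else 32700
    return base + utm_zone(lon_deg)


if __name__ == "__main__":
    # Example coordinates chosen only for illustration.
    print(utm_zone(-69.8), utm_epsg(44.2, -69.8))
```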

Point Cloud Output

A point cloud is generated automatically. If no output GMP file is specified, it will be saved in a temporary file and loaded in the workspace. Check the Save to GMP File option and press the Select... button to specify the name and location of the output point cloud file. In the Layer Description box, specify the name of the point cloud layer when it is loaded into the workspace. The default output layer name for the point cloud is Generated Point Cloud. Point Clouds generated in Pixels to Points will have an additional attribute, SYNTHETIC = Y.

The generated point cloud is treated as a lidar point cloud and may be further processed with the Automatic Point Cloud Analysis tools. The point cloud will contain the RGB colors from the images and an intensity value that represents the grayscale color value (note that this is not a true intensity value, since no active remote sensing was performed).
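The idea of a grayscale value standing in for intensity can be illustrated with a common luma conversion. The exact formula used by the tool is not documented here, so the Rec. 601 weights below are an assumption chosen only because they are widely used for RGB to grayscale conversion.

```python
def grayscale_intensity(r: int, g: int, b: int) -> int:
    """Approximate grayscale value (0-255) from 8-bit RGB.

    Assumption: Rec. 601 luma weights. The weights actually used by
    Pixels to Points may differ.
    """
    for channel in (r, g, b):
        if not 0 <= channel <= 255:
            raise ValueError("channels must be in the range 0-255")
    return int(round(0.299 * r + 0.587 * g + 0.114 * b))


if __name__ == "__main__":
    print(grayscale_intensity(120, 180, 60))
```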

The point cloud output will contain metadata parameters reflecting the settings used in the tool. These special metadata parameters are stored in the generated output layer and are only saved in a Global Mapper package file export.

The following special metadata attributes are saved in the package files generated in the Pixels-to-Points tool.

| Attribute | Description |
| --- | --- |
| SFM_TYPE | The Analysis Method selected in the tool (Incremental or Global) |
| SFM_QUALITY | Quality setting |
| DENSIFY_REDUCE_POWER | Reduction during the point cloud densification process is impacted by the quality setting. This setting is only customizable through scripting. |
| CAMERA_MODEL | Camera Type setting |
| IMAGE_FOLDER | File path to the input images |
| IMAGE_COUNT | The number of input image files |
| IMAGE_PIX_COUNT | Total count of all pixels in the processed images |
| IMAGE_REDUCE_FACTOR | Reduce Image size setting |

Create Point Cloud by Resampling Mesh (3D Model)

Select this option to produce a point cloud from the mesh. This creates a less noisy point cloud. Checking this option will generate a mesh feature, whether or not it is saved as an output, which will increase the processing time. A point cloud can also be created from the saved mesh at a later point. See Create Point Cloud from Mesh.

Orthoimage Output

Check the Create Orthoimage GMP file option to produce an orthorectified image layer as an additional output of the tool. This output can be saved to the same Global Mapper package file as the point cloud, or press the Select... button to specify a different output package file.

Note: An orthoimage output can also be created later from the generated point cloud after the tool has run, using the Create Elevation Grid tool, and selecting a Grid Type of Color(RGB).

The standard orthoimage output is calculated using a binning method of gridding, which selects the color of the highest elevation point for each output pixel. (See below for an option to generate the orthoimage from the mesh).
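The binning idea can be sketched in a few lines: every point falls into one grid cell, and each cell keeps the color of its highest point. This is an illustrative simplification with hypothetical names and a plain dictionary grid; it leaves out gap filling and everything else the tool does internally.

```python
import math


def bin_highest_point_color(points, cell_size):
    """Return {(col, row): (r, g, b)} using the highest point in each cell.

    points: iterable of (x, y, z, (r, g, b)) tuples.
    cell_size: output pixel size in the same units as x and y.
    """
    best = {}  # (col, row) -> (z, rgb) of the highest point seen so far
    for x, y, z, rgb in points:
        cell = (math.floor(x / cell_size), math.floor(y / cell_size))
        if cell not in best or z > best[cell][0]:
            best[cell] = (z, rgb)
    return {cell: rgb for cell, (_z, rgb) in best.items()}


if __name__ == "__main__":
    sample = [
        (0.2, 0.3, 10.0, (90, 90, 90)),     # ground point
        (0.4, 0.1, 12.0, (200, 40, 40)),    # rooftop point in the same cell
        (1.5, 0.5, 5.0, (30, 120, 30)),     # point in the neighboring cell
    ]
    print(bin_highest_point_color(sample, cell_size=1.0))
```

Because the highest point wins, rooftops and tree canopy show in the image rather than the ground beneath them.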

Resampling (for Noise Removal)

Specify the resampling method applied when the orthoimage is loaded into the workspace. The default value is a Noise Filter that takes the median value in a 3x3 neighborhood. This filter impacts the display of the orthoimage and will be remembered as the display setting any time the Global Mapper package containing the orthoimage is loaded. The noise filter is the default because it helps reduce noise in the data that may be particularly noticeable in areas where the generated point cloud is less dense or at the edges of above-ground objects like buildings. The resampling method can also be changed in the layer display settings.

- Nearest Neighbor - simply uses the value of the sample/pixel that a sample location is in. When resampling an image this can result in a stair-step effect, but will maintain exactly the original color values of the source image.

- Bilinear Interpolation - determines the value of a new pixel based on a weighted average of the 4 pixels in the nearest 2 x 2 neighborhood of the pixel in the original image. The averaging has an anti-aliasing effect and therefore produces relatively smooth edges with less stair-step effect.

- Bicubic Interpolation - a more sophisticated method that produces smoother edges than bilinear interpolation. Here, a new pixel is a bicubic function using 16 pixels in the nearest 4 x 4 neighborhood of the pixel in the original image. This is the method most commonly used by image editing software, printer drivers, and many digital cameras for resampling images.

- Box Average (2x2, 3x3, 4x4, 5x5, 6x6, 7x7, 8x8, and 9x9) - the box average methods simply find the average value of the nearest 4 (for 2x2), 9 (for 3x3), 16 (for 4x4), 25 (for 5x5), 36 (for 6x6), 49 (for 7x7), 64 (for 8x8), or 81 (for 9x9) pixels and use that as the value of the sample location. These methods are very good for resampling data at lower resolutions. The lower the resolution of your export is compared to the original, the larger the "box" size you should use.

- Filter/Noise/Median (2x2, 3x3, 4x4, 5x5, 6x6, 7x7, 8x8, and 9x9) - the Filter/Noise/Median methods simply find the median value of the nearest 4 (for 2x2), 9 (for 3x3), 16 (for 4x4), 25 (for 5x5), 36 (for 6x6), 49 (for 7x7), 64 (for 8x8), or 81 (for 9x9) pixels and use that as the value of the sample location. This resampling function is useful for noisy rasters, because outlier pixels do not contribute to the kernel value. Some common sources of raster noise are previous compression artifacts or irregularities of a scanned map/image.

- Box Maximum (2x2, 3x3, 4x4, 5x5, 6x6, 7x7, 8x8, and 9x9) - the box maximum methods simply find the maximum value of the nearest 4 (for 2x2), 9 (for 3x3), 16 (for 4x4), 25 (for 5x5), 36 (for 6x6), 49 (for 7x7), 64 (for 8x8), or 81 (for 9x9) pixels and use that as the value of the sample location. These methods are very good for resampling elevation data at lower resolutions so that the new terrain surface has the maximum elevation value rather than the average (good for terrain avoidance). The lower the resolution of the export file is compared to the original, the larger the "box" size that should be used.

- Box Minimum (2x2, 3x3, 4x4, 5x5, 6x6, 7x7, 8x8, and 9x9) - the box minimum methods simply find the minimum value of the nearest 4 (for 2x2), 9 (for 3x3), 16 (for 4x4), 25 (for 5x5), 36 (for 6x6), 49 (for 7x7), 64 (for 8x8), or 81 (for 9x9) pixels and use that as the value of the sample location. These methods are very good for resampling elevation data at lower resolutions so that the new terrain surface has the minimum elevation value rather than the average. The lower the resolution of the export file is compared to the original, the larger the "box" size that should be used.

- Gaussian Blur (3x3, 5x5, 7x7) - the Gaussian blur methods calculate the value to be displayed for each pixel based on the nearest 9 (for 3x3), 25 (for 5x5), or 49 (for 7x7) pixels. The calculated value uses the Gaussian formula that weights the values based on the distance to the reference pixel.
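The neighborhood-based methods in the list above differ only in how they reduce the window of pixels to one value. The sketch below shows that for a single-band raster stored as a list of rows. It is an illustration, not Global Mapper's implementation: the function names are hypothetical, windows are clipped at the raster edge, and only odd window sizes are handled because an even window has no single center pixel.

```python
from statistics import median


def window_values(grid, row, col, size=3):
    """Collect the values in the size x size window centered on (row, col)."""
    if size % 2 == 0:
        raise ValueError("this sketch only handles odd window sizes")
    half = size // 2
    rows = range(max(0, row - half), min(len(grid), row + half + 1))
    cols = range(max(0, col - half), min(len(grid[0]), col + half + 1))
    return [grid[r][c] for r in rows for c in cols]


# Each method is just a different reduction of the same window.
REDUCERS = {
    "box_average": lambda values: sum(values) / len(values),
    "median": median,
    "box_maximum": max,
    "box_minimum": min,
}


def resample_pixel(grid, row, col, method, size=3):
    """Value of one output pixel using the named neighborhood method."""
    return REDUCERS[method](window_values(grid, row, col, size))


if __name__ == "__main__":
    # A flat area with one noisy outlier in the middle.
    raster = [
        [10, 10, 10],
        [10, 200, 10],
        [10, 10, 10],
    ]
    for name in REDUCERS:
        print(name, resample_pixel(raster, 1, 1, name))
```

In this example the median ignores the single outlier while the box average is pulled toward it, which is why the median filter is a reasonable choice for noisy orthoimages.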

Resolution

Specify the pixel resolution of the output orthoimage. This grid spacing setting can be specified using a multiple of the calculated average point spacing or using an explicit linear resolution in Feet or Meters.

See also more information about output file projection.

Create Higher Quality Orthoimage from Mesh

Check this option to create the Orthoimage from the mesh. When checked, a mesh is always created internally (even if not being saved), which will increase processing time. This option typically results in a better quality orthoimage.

Note: This can also be generated later from a saved textured mesh file. See Create Image from Mesh.

The orthoimage output will contain metadata parameters reflecting the settings used in the tool. These special metadata parameters are stored in the generated output and are only maintained in a Global Mapper package file export.

The following special metadata attributes are saved in the package files generated in the Pixels-to-Points tool.

| Attribute | Description |
| --- | --- |
| SFM_TYPE | The Analysis Method selected in the tool (Incremental or Global) |
| SFM_QUALITY | Quality setting |
| DENSIFY_REDUCE_POWER | Reduction during the point cloud densification process is impacted by the quality setting. This setting is only customizable through scripting. |
| CAMERA_MODEL | Camera Type setting |
| IMAGE_FOLDER | File path to the input images |
| IMAGE_COUNT | The number of input image files |
| IMAGE_PIX_COUNT | Total count of all pixels in the processed images |
| IMAGE_REDUCE_FACTOR | Reduce Image size setting (original input image reduction in resolution) |
| ORTHO_BIN_MULT | Resolution setting for the orthoimage output |
| ORTHO_GAP_SIZE | The amount of interpolation used in the gridding process to estimate pixel colors in areas of the image with no points. This setting is only customizable via scripting and will default to 8 pixels of interpolation to fill gaps |

Orthorectify Each Image Individually

This will create a layer orthorectifying each input image based on the generated point cloud. See Orthorectify Individual Images for more information.

Mesh (3D Model) Output

Select this option to create a simplified and textured 3D mesh output. This export requires additional processing time and will perform the following additional steps:

- Reconstruct Mesh - This step is performed via Delaunay Tetrahedralization of the dense point cloud.

- Refine Mesh - This step discards parts of the mesh that represent outliers in the point cloud, large triangles generated from noise, or low density sections of the point cloud.

- Texture Mesh - This step applies a photographic texture to the mesh.

Save to Format

Specify the format of the output mesh file. The mesh can be saved as a Wavefront OBJ file or a Global Mapper Package file. From there, it can be converted into other 3D model formats via Export 3D Formats.

The Global Mapper package export will contain coordinate reference system information and other 3D model orientation settings.

The Wavefront OBJ file will have an external *.prj file that Global Mapper recognizes as the coordinate reference system to load the file. The Wavefront OBJ file is exported with a Y-Up orientation, so when loading the model, if prompted with the 3D File Import Options dialog, do not check the 'Load Z-up Model as Y-up' setting. This model is already oriented Y-Up.
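For readers moving the OBJ output into other software, the difference between Y-Up and Z-Up is an axis swap. The sketch below shows one common right-handed convention (a 90 degree rotation about the X axis). Which horizontal axis maps to which is an assumption here, and other applications may use a different convention.

```python
def z_up_to_y_up(x, y, z):
    """Convert a right-handed Z-up coordinate to right-handed Y-up.

    Assumed convention: rotate -90 degrees about the X axis,
    so the old Z (up) becomes the new Y (up).
    """
    return (x, z, -y)


def y_up_to_z_up(x, y, z):
    """Inverse of z_up_to_y_up under the same assumed convention."""
    return (x, -z, y)


if __name__ == "__main__":
    vertex = (10.0, 20.0, 5.0)          # hypothetical Z-up vertex
    as_y_up = z_up_to_y_up(*vertex)
    print(as_y_up, y_up_to_z_up(*as_y_up))
```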

The mesh file will use the same coordinate system as the generated point cloud. If no workspace coordinate system was set prior to running Pixels-to-Points, this will generate the mesh in an appropriate UTM zone. The export will also create a texture image stored in an external image file ( *.jpg), and the material will be defined in a *.MTL file.

Log/ Statistics Output

Choose to output a log and statistics file from the process. This will also contain residual error calculations. If the log/ statistics folder is selected, the log will be generated while the tool is running and saved to a temporary folder that will be listed at the bottom of the Calculating Cloud/ Mesh from Images dialog.

Ground Control Points

Ground Control Points are not required. The point cloud may also be adjusted after it is generated, using rectification or shifting for horizontal adjustment. Vertical adjustment can be done on the resulting point cloud using Alter elevation values for a fixed offset, or the Lidar QC tool for vertical control point comparison and alignment. For more information on adding ground control points, see Ground Control Points with Pixels-to-Points™.

Options

Reduce Image Size (Faster/ Less Memory) by a Factor

This setting will downsample the input images (i.e., reduce their pixel resolution) before processing them. The input image files will be resampled using a box average based on the scale factor size.

This setting has the greatest impact on the processing speed of the Pixels-to-Points tool. Reducing the number of pixels in the input images sharply decreases the processing time. This will slightly reduce the number of points in the generated point cloud, but by a smaller factor than the initial image reduction.
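A box-average reduction can be sketched as follows for a single-band image stored as a list of rows. This illustrates the general technique only; the function name is hypothetical, and rows or columns that do not fill a complete block are simply dropped, which may not match how the tool treats image edges.

```python
def box_downsample(image, factor):
    """Shrink an image by averaging each factor x factor block of pixels."""
    if factor < 1:
        raise ValueError("factor must be at least 1")
    out_rows = len(image) // factor
    out_cols = len(image[0]) // factor
    result = []
    for r in range(out_rows):
        row = []
        for c in range(out_cols):
            block = [
                image[r * factor + i][c * factor + j]
                for i in range(factor)
                for j in range(factor)
            ]
            row.append(sum(block) / len(block))
        result.append(row)
    return result


if __name__ == "__main__":
    image = [
        [1, 3, 5, 7],
        [1, 3, 5, 7],
        [2, 4, 6, 8],
        [2, 4, 6, 8],
    ]
    small = box_downsample(image, 2)
    print(small)
    # Pixel count drops by factor squared: 16 pixels become 4.
    print(len(image) * len(image[0]), "->", len(small) * len(small[0]))
```

Because the pixel count falls with the square of the factor, a factor of 2 leaves a quarter of the pixels and a factor of 4 leaves one sixteenth.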

Use Relative Altitude Based on Ground Height

The relative altitude setting will vertically shift the point cloud. Elevation measurements in GPS data are not extremely precise and are also typically referencing an ellipsoidal height model. This setting, therefore, overrides the elevation values by specifying a starting ground height for the first input image. For example, if the site of the launch is surveyed during UAV data collection, that precise elevation value can be set as the ground height. Subsequent calculated points in the output point cloud will then use the relative altitude to calculate the vertical positions.

The Relative Altitude value will automatically populate from terrain sources. It first checks loaded terrain data. If none is present, it queries the 10m National Elevation Dataset (NED), then the ASTER GDEM, and finally the 30m SRTM data to recommend a ground height value for the image. The query stops at the first valid elevation value.

Note: Obtaining the ground height from terrain data like NED, ASTER, and SRTM requires an internet connection.
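The lookup order described above is a first-valid-result fallback chain. The sketch below shows that pattern with placeholder lookup functions; the source names come from the text, but the functions and their return values are hypothetical and do not call any real elevation service.

```python
def recommend_ground_height(lat, lon, sources):
    """Return (source_name, height) from the first source with a valid value.

    sources: ordered list of (name, lookup) pairs, where lookup(lat, lon)
    returns a height as a float, or None when no data is available.
    """
    for name, lookup in sources:
        height = lookup(lat, lon)
        if height is not None:
            return name, height   # stop at the first valid elevation
    return None, None


if __name__ == "__main__":
    # Placeholder lookups standing in for real terrain queries.
    sources = [
        ("loaded terrain", lambda lat, lon: None),   # nothing loaded
        ("NED 10m", lambda lat, lon: None),          # outside coverage
        ("ASTER GDEM", lambda lat, lon: 152.0),      # first valid value
        ("SRTM 30m", lambda lat, lon: 149.5),        # never reached
    ]
    print(recommend_ground_height(44.2, -69.8, sources))
```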

Harmonize Color

Note: It is recommended to turn off the camera's automatic mode when collecting images to improve consistency across the dataset.

If the brightness, contrast, and color are not consistent across images, this option will color balance the images to match a selected reference. This can improve the reconstruction result. This setting is useful when the camera is in automatic mode and the shutter speed and aperture are different for each image. It can also sometimes help in cases where the sun or clouds changed during the data collection.

There are two steps for the Harmonize Color Option:

1. Choose from three available methods, based on your data:

   - FullFrame - uses all of each image as valid pixel selection for attempting color harmonization.

   - MatchedPoints - restricts the search area for matching points to a radius of 10.

   - VLD / KVLD - improves feature matches in datasets that include a lot of repetition, such as facade windows on a building.

2. After selecting an option, check the image list to find an appropriately colored image, then right-click to Set the Selected Image as the Reference Image for Color Harmonization. The image will appear red in the list when it is set as the reference.

Enable Clustering

Ideal for datasets with hundreds of images, clustering allows for processing large scenes on a machine that might not otherwise have enough memory, lessening the need for image reduction. It is a processing method that divides the cameras into groups of images called clusters to perform a high resolution reconstruction on each group. Clustering works best with datasets that have high overlap.

The Lower and Upper Bounds refer to the minimum and maximum number of images in each cluster. Smaller datasets or datasets with sparsely spaced imagery need a Lower Bound of at least 40 images for the best results. It is not recommended for the Upper/Lower Bounds to be lower than 80/40.

Clustering is not recommended for image sets smaller than 150 images.
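The clustering recommendations can be collected into a simple pre-flight check. This is a sketch with a hypothetical function name that only encodes the thresholds stated above (150 images, Upper/Lower Bounds of 80/40); it does not reproduce any validation the tool itself performs.

```python
def clustering_warnings(image_count, lower_bound, upper_bound):
    """Return a list of warnings for the given clustering settings."""
    warnings = []
    if lower_bound > upper_bound:
        warnings.append("Lower Bound is greater than Upper Bound.")
    if image_count < 150:
        warnings.append("Clustering is not recommended below 150 images.")
    if lower_bound < 40 or upper_bound < 80:
        warnings.append("Upper/Lower Bounds below 80/40 are not recommended.")
    return warnings


if __name__ == "__main__":
    for message in clustering_warnings(image_count=100, lower_bound=20, upper_bound=60):
        print(message)
```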

Analysis Method

Specify the method used for the Structure from Motion (SfM) analysis. For more information, see Analysis Method.

Quality

The quality setting controls how much examination is done to identify matching features in the initial sparse point cloud generation (for the incremental method only), as well as the resolution used in the point cloud densification process. In most cases, the difference between normal and high quality is not significantly noticeable, but using the high or highest setting will increase the processing time.

Normal (Default)

The normal setting impacts the amount of feature identification and matching performed when first detecting feature points. This is only used in the Incremental Analysis Method. During the densification process, a setting of Normal will use half the full image resolution.

High

The high setting performs additional feature identification and matching when first calculating the sparse point cloud. The setting also uses the full image resolution during the point cloud densification and mesh creation process. A high setting requires more memory, and if the densification and mesh creation runs out of memory, it will attempt to rerun with the normal setting (half resolution images for densification and mesh generation).

Highest

The highest setting works to identify additional features, generating higher resolution outputs. Like the high setting, this option uses the full image resolution during the point cloud densification and mesh creation process. With this setting, the generated mesh texture will have a higher resolution because the images are not down-sampled when applied to the texture.

Camera Type

The camera type accounts for distortion in the image. Most consumer cameras are pinhole cameras, where the image can be mapped onto a planar surface. The camera type needs to be known in order to accurately reconstruct the 3-dimensional structure. The camera type typically only needs to be modified if the camera is a Fisheye or wide field of view lens. The default value of a Pinhole Radial 3 will calculate a best-fit model with 3 parameters of radial distortion when locating the pixels in 3-dimensional space.

Pinhole - A classic pinhole camera. Select this option if the images already have radial distortion correction applied, or if the camera is designed for photogrammetry and advertises low distortion images or distortion removal. In some cases, Global Mapper will detect distortion removal in the image metadata and automatically switch the camera type to this setting.

Pinhole Radial 1 - A classic pinhole camera with a best-fit for radial distortion defined by 1 factor to remove distortion.

Pinhole Radial 3 - A classic pinhole camera with a best-fit for radial distortion by 3 factors to remove distortion. This is the default and standard distortion correction applied to most images.

Pinhole Brown 2 - A classic pinhole camera with a best-fit for radial distortion by 3 factors and tangential distortion by 2 factors. This setting is recommended if the camera lens and sensor are not perfectly parallel, which will result in the images appearing noticeably tilted and stretched.

Pinhole with simple fisheye - Fisheye distortion defined by 4 factors. This setting should be used with fisheye and wide field of view cameras.

For more information on the types of lens distortion, see Understanding Lens Distortion.
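To make the "number of factors" in the camera types more concrete, the sketch below applies the widely used polynomial radial model and the Brown tangential terms to a normalized image coordinate. This is the textbook form of these models, written under the assumption that the tool's Pinhole Radial 1, Pinhole Radial 3, and Pinhole Brown 2 types follow it; the function names and coefficient values are illustrative.

```python
def distort_radial(x, y, k1, k2=0.0, k3=0.0):
    """Radial distortion with up to 3 coefficients.

    (x, y) are normalized image coordinates relative to the principal point.
    With only k1 set this corresponds to a 1-factor model; with k1, k2, k3
    it corresponds to a 3-factor model.
    """
    r2 = x * x + y * y
    scale = 1.0 + k1 * r2 + k2 * r2 ** 2 + k3 * r2 ** 3
    return x * scale, y * scale


def distort_brown(x, y, k1, k2, k3, t1, t2):
    """Radial (3 coefficients) plus tangential (2 coefficients) distortion."""
    r2 = x * x + y * y
    xr, yr = distort_radial(x, y, k1, k2, k3)
    dx = 2.0 * t1 * x * y + t2 * (r2 + 2.0 * x * x)
    dy = t1 * (r2 + 2.0 * y * y) + 2.0 * t2 * x * y
    return xr + dx, yr + dy


if __name__ == "__main__":
    # Hypothetical coefficients for a point halfway to the image edge.
    print(distort_radial(0.5, 0.0, k1=0.1))
    print(distort_brown(0.5, 0.25, 0.1, 0.01, 0.0, 0.001, 0.002))
```

During reconstruction these coefficients are estimated as a best fit, and the inverse mapping is used to remove the distortion from the observed pixel positions.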

Run

Once the input images have been loaded and all the desired settings selected, press the Run button to start the conversion process.

When the Run button is pressed, the application checks the expected memory requirements based on the input data and settings before processing begins. If the process is expected to require more memory than the machine has available with the specified settings, a warning dialog will be displayed. This dialog will suggest an image reduction factor that allows the input data to be processed reasonably with the machine's resources.

Calculating Cloud/ Mesh from Images

Once the process is running, this calculation dialog will display the progress. It shows the log as the process runs, along with a progress bar and an estimated finish time. The bottom of the dialog lists the path to the log file.

Results

A dialog will display the point cloud process summary when the process is complete. The output files will be loaded into the current workspace.

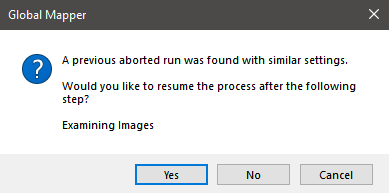

Resuming Canceled Operations

Enabling the Save Work Files to Allow Resuming Canceled Operations option allows you to cancel an ongoing Pixels to Points operation and later restart from where the process left off.

When you click Run on a Pixels to Points process that has the same input settings and options as the canceled operation, Global Mapper will prompt you with the option to continue the aborted run from the last completed step.

This only works if the Save Work Files to Allow Resuming Canceled Operations option was enabled during the initial run.

Outputs can be added before resuming, but changing other settings will start a new process as the previously created work files will no longer apply.

Pixels to Points (P2P) Workspace file

Save your Pixels to Points workspace from the tool's File menu. A Pixels to Points (P2P) workspace file (*.gmp2p) saves all of your Pixels to Points settings, including the file paths to the images and the ground control point image positions. When sending a *.gmp2p file to a different machine, you will also need to include the image files.

A Pixels to Points workspace file can be loaded into Global Mapper as a normal file, or directly from the Pixels to Points File Menu.